In this post I will be exploring some data I found about the Vietnam War.

The data comes from the National Archives Catalog.

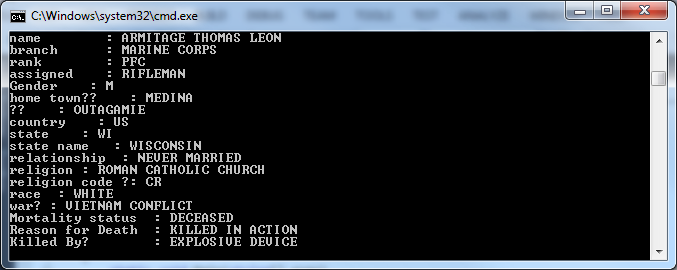

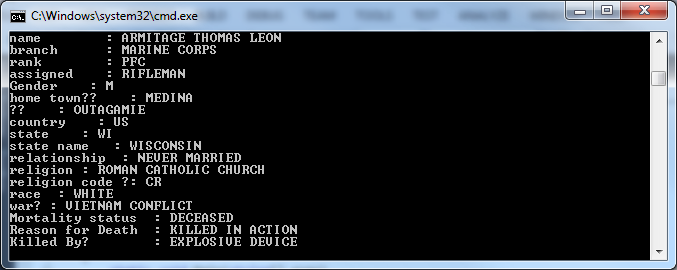

The downloaded data file, DCAS.VN.EXT08.DAT, contains 58,220 records. Each row appears to describe one individual involved in the war. I couldn’t make immediate sense of the codebook documents, so I went straight to parsing the raw file. By eyeballing the values in each column, I was able to determine the following attributes per row:

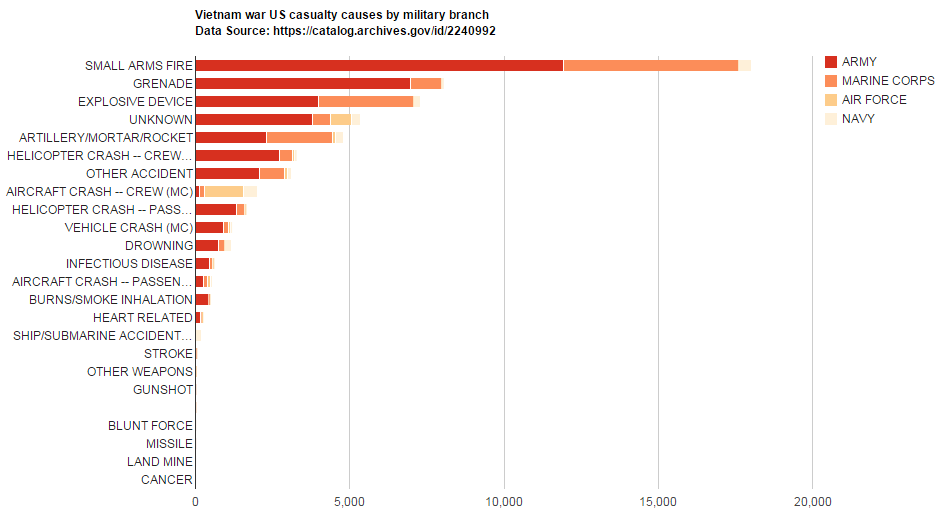

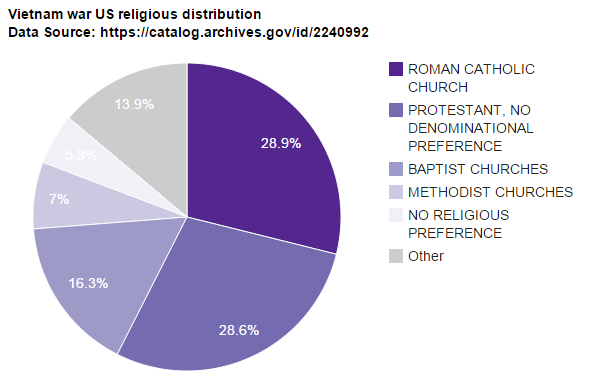

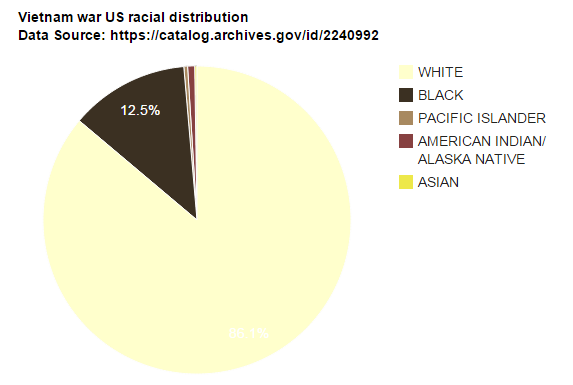

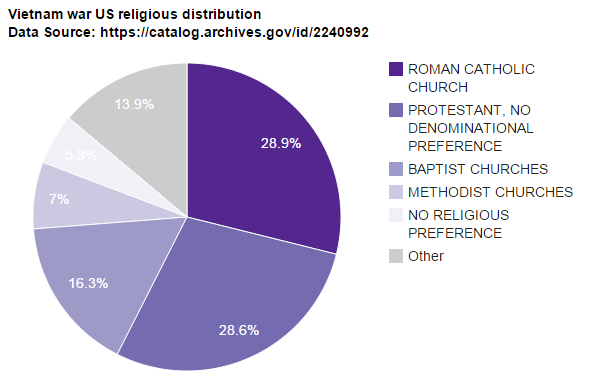

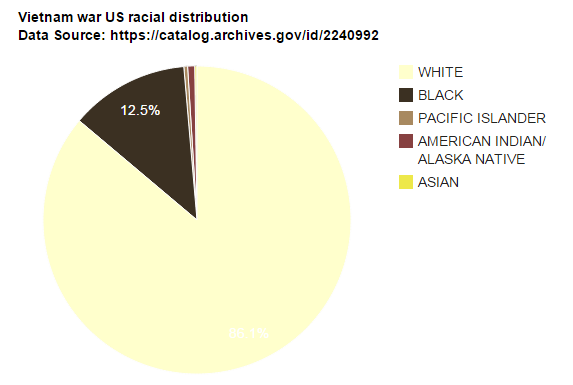

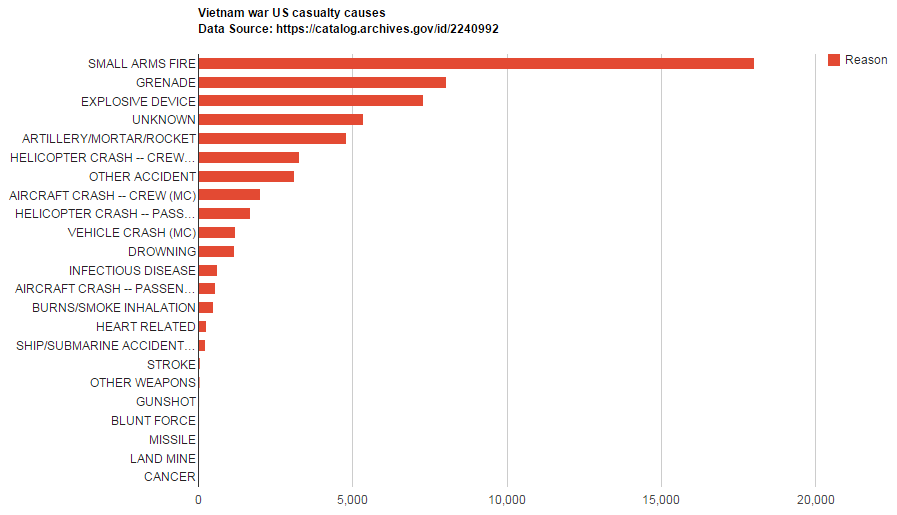

Name, Branch, Rank, Assigned Position, Gender, Hometown, Country, State, Relationship Status, Religion, Race, Mortality Status, and Reason of Death.
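Here is a minimal sketch of how that kind of column eyeballing could be done: split one record on the pipe character and print every field next to its zero-based index. The helper name `DescribeColumns` and the sample record are hypothetical (the sample is made up, not copied from the data file), and the snippet assumes a compiler that supports top-level statements (C# 9 or later).

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper: pairs each delimited field with its zero-based index.
static List<string> DescribeColumns(string line, char delimiter = '|')
{
    string[] parts = line.Split(delimiter);
    var described = new List<string>();
    for (int i = 0; i < parts.Length; i++)
    {
        // Trim padding so blank fields are easy to spot.
        described.Add($"[{i}] {parts[i].Trim()}");
    }
    return described;
}

// Made-up sample record for illustration; NOT a row from DCAS.VN.EXT08.DAT.
string sampleLine = "A|B|C|D|DOE JOHN|E|ARMY|PFC";
foreach (string column in DescribeColumns(sampleLine))
{
    Console.WriteLine(column);
}
```

Running this against a handful of real rows makes it easier to match an index to an attribute before hard-coding it.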

C# Console:

    // Enlarge the scrollback buffer so more records stay visible (Windows consoles only).
    Console.BufferHeight = 4000;
    Console.WriteLine("Charlie in the Trees");

    // ReadAllLines copes with both \r\n and \n line endings.
    string[] lines = System.IO.File.ReadAllLines(@"C:\VIETNAM\DCAS.VN.EXT08.DAT");

    int count = 0;
    foreach (string line in lines)
    {
        string[] parts = line.Split('|');

        // Skip blank or truncated lines so the indexing below cannot throw.
        if (parts.Length <= 7)
        {
            continue;
        }

        Console.WriteLine("name : " + parts[4]);
        Console.WriteLine("branch : " + parts[6]);
        Console.WriteLine("rank : " + parts[7]);
        /* ... */
        Console.WriteLine();
        count += 1;
    }

    Console.WriteLine();
    Console.WriteLine();
    Console.WriteLine("total " + count);

output

Alright, now that we’ve parsed the data, I typically ask myself these questions:

What is interesting?

What do I want to see?

What might be controversial?

What could attract the attention of others?

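    // Tally column 45 (which appears to hold the reason of death)
    // for every record whose column 43 reads DECEASED.
    Dictionary<string, int> dictConcepts = new Dictionary<string, int>();

    foreach (string line in lines)
    {
        string[] parts = line.Split('|');

        // Skip blank or truncated lines, then keep only deceased records.
        if (parts.Length <= 45 || parts[43] != "DECEASED")
        {
            continue;
        }

        // TryGetValue leaves current at 0 when the key is not present yet.
        dictConcepts.TryGetValue(parts[45], out int current);
        dictConcepts[parts[45]] = current + 1;
    }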
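The loop fills `dictConcepts` but never prints it. One way to inspect the tallies is to sort them from most to least frequent. This is only a sketch: the helper name `RankByCount` is hypothetical, the counts are made-up placeholders rather than results from the file, and it assumes a compiler that supports top-level statements (C# 9 or later).

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: orders tally entries by count (highest first),
// breaking ties alphabetically so the output is stable.
static List<KeyValuePair<string, int>> RankByCount(Dictionary<string, int> counts)
{
    return counts
        .OrderByDescending(pair => pair.Value)
        .ThenBy(pair => pair.Key, StringComparer.Ordinal)
        .ToList();
}

// Placeholder tallies for illustration only; these are not figures from the data.
var sampleCounts = new Dictionary<string, int>
{
    { "REASON A", 3 },
    { "REASON B", 7 },
    { "REASON C", 1 },
};

foreach (KeyValuePair<string, int> ranked in RankByCount(sampleCounts))
{
    // Right-align the count so the column lines up.
    Console.WriteLine(ranked.Value.ToString().PadLeft(6) + "  " + ranked.Key);
}
```

With the real data, passing `dictConcepts` instead of `sampleCounts` would list the most common values first.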